eXtended reality for Rehabilitation

Focus Area(s): Smart living: rehabilitation, ergonomics, postural analysis, exercising

Scenario description:

The aim of this pilot is to demonstrate the transformative potential of Extended Reality (XR) technologies in the rehabilitation of patients with orthopedic, neurological, and oncological conditions. The pilot’s central ambition is to enhance patient motivation, engagement, and recovery outcomes by blending immersive digital environments with real-time physiological monitoring and feedback. Physiotherapy after orthopedic surgery, or for other upper limb motor impairments such as “frozen shoulder” or severe hand arthritis, is crucial for complete rehabilitation but is often repetitive, tedious, and time-consuming. Indeed, motor recovery often requires a very long course of physiotherapy, sometimes more than 50 sessions.

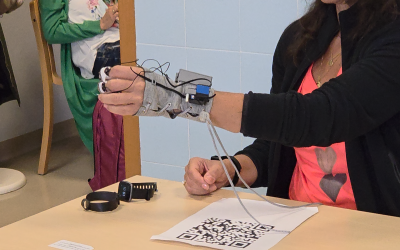

The pilot revolved around a novel XR-based rehabilitation platform integrating motion and electromyographic (EMG) sensors, wearable haptic actuators, and AI-driven feedback mechanisms. These components create a supervised yet personalized environment, where both patient and therapist can monitor performance and adapt therapy dynamically. The platform was applied to two clinical use cases: upper limb rehabilitation (focusing on shoulder pathologies and lymphedema following breast cancer treatment) and lower limb rehabilitation, targeting post-surgical knee recovery.

In the upper limb scenario, patients wore an augmented reality headset (HoloLens 2) and interacted with 3D virtual objects in a “pick-and-place” exercise framed as a serious game. Guided by an avatar demonstrating correct movements, patients practiced repetitive goal-oriented tasks with real-time visual and haptic feedback that indicated errors or compensatory movements. The lower limb scenario adopted a more autonomous setup, where patients followed their mirrored 3D avatar performing squats, seated extensions, and walking exercises, receiving immediate feedback on posture and accuracy.
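The page does not detail how the platform decides when to signal an error or a compensatory movement. As a minimal sketch, assuming the tracking system reports joint angles in degrees for both the patient and the reference avatar, a per-frame check could compare the two and treat excessive trunk lean as compensation. The `PoseSample` fields, the `feedback` function, and both tolerance values are hypothetical illustrations, not parameters taken from the pilot.

```python
from dataclasses import dataclass

# Hypothetical thresholds in degrees; illustrative only, not from the pilot.
ANGLE_TOLERANCE_DEG = 15.0
TRUNK_LEAN_LIMIT_DEG = 10.0


@dataclass
class PoseSample:
    """Joint angles (degrees) for one tracked frame; field names are hypothetical."""
    shoulder_flexion: float
    trunk_lean: float


def feedback(patient: PoseSample, reference: PoseSample) -> list[str]:
    """Compare the patient's pose with the avatar's reference pose for one frame."""
    messages = []
    error = patient.shoulder_flexion - reference.shoulder_flexion
    # Flag the target movement only when it deviates beyond the tolerance.
    if abs(error) > ANGLE_TOLERANCE_DEG:
        direction = "raise" if error < 0 else "lower"
        messages.append(f"{direction} arm: off by {abs(error):.0f} deg")
    # Leaning the trunk to reach the target is treated as a compensatory movement.
    if abs(patient.trunk_lean) > TRUNK_LEAN_LIMIT_DEG:
        messages.append("compensation: keep trunk upright")
    return messages


if __name__ == "__main__":
    reference = PoseSample(shoulder_flexion=90.0, trunk_lean=0.0)
    print(feedback(PoseSample(shoulder_flexion=70.0, trunk_lean=12.0), reference))
    # → ['raise arm: off by 20 deg', 'compensation: keep trunk upright']
```

In a real system these messages would drive the visual cues on the headset and the haptic actuators; a simple threshold is used here only to keep the idea readable.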

The first validation phase, conducted at Versilia Hospital, involved healthy volunteers to test system usability, comfort, and functionality. Results confirmed strong engagement and ease of use, though users highlighted areas for improvement such as glove fit and avatar accuracy. These insights guided system refinements before advancing to clinical testing.

The final validation phase involved six real patients undergoing upper or lower limb rehabilitation. Clinical assessments, including the DASH, Oxford Knee Score, and WOMAC scales, were administered alongside physiological measurements. Patients reported high satisfaction, comfort, and motivation, appreciating the immersive feedback and interactive exercises. Clinically, modest but measurable improvements were observed in upper limb function and lymphedema reduction. Although lower limb results showed no immediate functional gains, consistent with the longer recovery time of such conditions, the system was well accepted by patients and clinicians alike.

Overall, Pilot 20 successfully demonstrated the feasibility and therapeutic value of XR in clinical rehabilitation. It showed that immersive, sensor-driven environments can increase patient engagement, personalize recovery pathways, and strengthen the therapeutic alliance between clinician and patient. The evolution from prototype to clinical validation marks a significant step toward integrating XR technologies into standard rehabilitation practice, offering a vision of future care that is both technologically advanced and deeply human-centered.

A video of the validation performed at Versilia Hospital is available on the SUN YouTube channel.

Technical challenges:

● Real Physical Haptic Perception Interfaces

● Non-invasive bidirectional body-machine interfaces

● Wearable sensors in XR

● Low latency gaze and gesture-based interaction in XR

● 3D acquisition with physical properties

● AI-assisted 3D acquisition of unknown environments with semantic priors

● AI-based convincing XR presentation in limited-resource setups

● Hyper-realistic avatar

Impact:

This use case aims to highlight the integration of innovative technologies (multimodal sensors, AI, XR, smart appliances) into rehabilitation and exercising supervised by specialists in the home environment, in order to achieve higher compliance among participants. Real-time monitoring through cameras, sensors, and wearables; XR avatars; augmented visual feedback; AI algorithms; and the therapist’s perspective are all combined and delivered to the patient through the most familiar means, such as a smartphone or another smart appliance, to increase compliance. A successful outcome of this use case will allow planning a fully integrated XR approach to remote rehabilitation and exercising using full-body data. This approach aims to shape the future of remote rehabilitation and exercising for special populations.